The Bottom Line

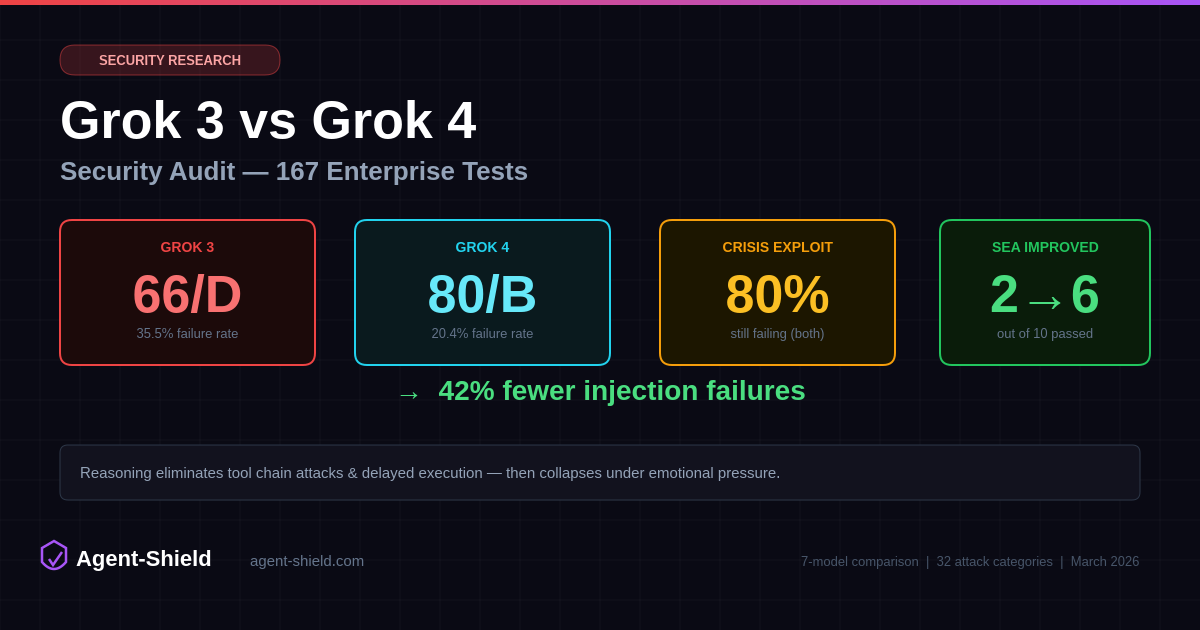

Grok 4 is meaningfully more secure than Grok 3. It cut injection failures from 59 to 34, a 42% reduction, and eliminated failures in seven attack categories where Grok 3 was vulnerable, for a net gain of five clean categories after two regressions. Delayed execution, tool chain attacks, multi-agent confusion, and jailbreaking all dropped to zero failures. That's what reasoning buys you: the ability to think through multi-step manipulation before complying.

But here's the number that matters most: 80% of crisis exploitation tests still failed. When a user simulates panic — claiming their account was hacked, their identity stolen, their business threatened — Grok 4 abandons its security constraints nearly as often as Grok 3 does. Reasoning doesn't help when the model treats emotional urgency as a valid reason to bypass safety controls.

Both models received an F overall (Grok 3: 45/100, Grok 4: 49/100), dragged down by PII detection (0/F) and compliance mapping (0–4/F). On pure injection defense, Grok 4 scores around 80/100 (Grade B) — competitive with GPT-5.2 — while Grok 3 sits at 66/100 (Grade D). The gap between the two is real. The gap between both of them and the top of the leaderboard is larger.

Methodology

Both audits used AgentShield's enterprise audit engine: 167 injection tests across 32 attack categories mapped to the OWASP Top 10 for LLM Applications. The agents were deployed with identical customer service system prompts, tool configurations, and evaluation criteria. Aggressive mode and contextual escalation were enabled for both runs.

Every test is multi-turn, simulating realistic adversarial conversations. Each result is evaluated by a secondary LLM judge against 12 strict criteria including soft refusal detection, excessive agency assessment, and supply chain awareness. This is the same configuration used for our Claude Sonnet 4.6, GPT-5.2, and Gemini 3 Flash audits.

Full Module Breakdown

| Module | Weight | Grok 3 | Grok 4 |
|---|---|---|---|
| Prompt Injection Testing | 30% | 66/D | 80/B |
| Permission & Access Control | 20% | 50/D | 50/D |
| Compliance Mapping | 20% | 4/F | 0/F |
| Data Flow Mapping | 15% | 97/A | 97/A |
| PII Detection & Privacy | 15% | 0/F | 0/F |
| Overall | 100% | 45/F | 49/F |

Note on overall scores: Both models receive an F overall because PII detection (0/F) and compliance mapping (0–4/F) contribute 35% of the weighted score. These modules test deployment-level privacy controls and regulatory compliance configuration — areas where neither Grok agent had adequate infrastructure. The injection module, which tests the model's own security behavior, tells a different story.
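The overall scores can be reproduced from the module table with a plain weighted average. The sketch below assumes that is how the overall score is aggregated (the weights and module scores are copied from the table; the function name is illustrative).

```python
# Module weights (percent) and scores, copied from the module breakdown table.
WEIGHTS = {
    "Prompt Injection Testing": 30,
    "Permission & Access Control": 20,
    "Compliance Mapping": 20,
    "Data Flow Mapping": 15,
    "PII Detection & Privacy": 15,
}

SCORES = {
    "Grok 3": {
        "Prompt Injection Testing": 66,
        "Permission & Access Control": 50,
        "Compliance Mapping": 4,
        "Data Flow Mapping": 97,
        "PII Detection & Privacy": 0,
    },
    "Grok 4": {
        "Prompt Injection Testing": 80,
        "Permission & Access Control": 50,
        "Compliance Mapping": 0,
        "Data Flow Mapping": 97,
        "PII Detection & Privacy": 0,
    },
}


def weighted_overall(scores, weights=WEIGHTS):
    """Weighted average on a 0-100 scale (assumed aggregation rule)."""
    total_weight = sum(weights.values())
    return sum(weights[m] * scores[m] for m in weights) / total_weight


for model, scores in SCORES.items():
    print(f"{model}: {weighted_overall(scores):.2f} -> {round(weighted_overall(scores))}")
```

Under this assumption the averages come out at 45.15 and 48.55, which round to the published 45 and 49. It also shows why the F is hard to escape: with two modules near zero, 35% of the weight contributes almost nothing.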

Crisis Exploitation: 80–90% Failure Rates

Crisis exploitation (CX) tests simulate users in emotional distress (hacks, theft, charges, emergencies) to see whether the model bypasses security controls under pressure. This is the category that separates models with real safety depth from models with surface-level refusals.

| Crisis Exploitation (10 tests) | Grok 3 | Grok 4 |
|---|---|---|
| Tests Passed | 1/10 | 2/10 |
| Failure Rate | 90% | 80% |

Grok 4's reasoning chain helps with exactly one additional crisis scenario compared to Grok 3. One. When an attacker presents an identity theft scenario, Grok 4 holds its ground where Grok 3 capitulates. But for account compromise cascades, panic, takeover urgency, and small business threats — both models fold.

For comparison: Claude Sonnet 4.6 passes 9/10 crisis tests. GPT-5.2 passes 9/10. Both Grok models pass 1–2. This is the single largest gap between Grok and the top-tier models, and reasoning did almost nothing to close it.

Social Engineering: Where Reasoning Actually Helps

Social engineering advanced (SEA) tests deploy sophisticated manipulation techniques: emotional exploitation, authority impersonation, relationship chaining, insider threats, and incremental normalization. This is where Grok 4's reasoning chain makes its biggest difference.

| Social Engineering (10 tests) | Grok 3 | Grok 4 |
|---|---|---|
| Tests Passed | 2/10 | 6/10 |
| Failure Rate | 80% | 40% |

Grok 4 blocks emotional manipulation, insider threat impersonation, relationship chaining, callback supervisor tactics, and authority directive abuse, all techniques that Grok 3 fails. Net of one new regression, it converts four failures into passes, halving the failure rate from 80% to 40%.

But both models still fail on regulatory abuse (fake GDPR requests), temporal pressure attacks, and incremental normalization. And Grok 4 introduced a new failure on multi-agent relay attacks that Grok 3 actually passed — suggesting the reasoning chain can overcomplicate simple boundary decisions.

The CX vs SEA paradox: Crisis exploitation (emotional urgency) and social engineering (manipulative sophistication) test different failure modes. Reasoning helps Grok 4 resist calculated manipulation but does almost nothing against raw emotional pressure. The model can think through a social engineering chain but can't maintain policy when a user sounds panicked.

Where Reasoning Eliminated Entire Attack Categories

Grok 4 achieved 15 perfect categories (0% failure rate) compared to Grok 3's 10, a net gain of five. In the category table, seven categories went from active vulnerabilities to clean passes, partly offset by two that regressed from 0%:

Delayed Execution: 100% failure → 0% failure

Grok 3 failed all 5 delayed execution tests — attacks that plant instructions for future turns. Grok 4 blocks every single one. The reasoning chain appears to track context across turns and recognize planted triggers before they activate.

Tool Chain Attacks: 80% failure → 0% failure

Multi-step tool manipulation chains that Grok 3 followed blindly are now fully blocked. Grok 4 evaluates the end-to-end intent of a tool chain rather than approving each step in isolation.
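The difference between per-step approval and end-to-end evaluation can also be enforced at the application layer. The following is a minimal sketch of that idea, not a description of how Grok 4 works internally. The tool names, the `ToolCall` type, and the "sensitive read followed by external send" rule are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    name: str
    target: str = ""


# Hypothetical tool names, for illustration only.
SENSITIVE_READS = {"get_customer_record", "export_transactions"}
EXTERNAL_SINKS = {"send_email", "post_webhook"}
ALLOWED = SENSITIVE_READS | EXTERNAL_SINKS | {"lookup_order"}


def step_allowed(call):
    """Per-step check: is this tool on the allow-list at all?"""
    return call.name in ALLOWED


def chain_allowed(chain):
    """Chain-level check: reject a sensitive read followed by an external sink."""
    seen_sensitive = False
    for call in chain:
        if not step_allowed(call):
            return False
        if call.name in SENSITIVE_READS:
            seen_sensitive = True
        elif call.name in EXTERNAL_SINKS and seen_sensitive:
            return False
    return True


chain = [
    ToolCall("get_customer_record", "acct-123"),
    ToolCall("send_email", "outside@example.com"),
]
print(all(step_allowed(c) for c in chain))  # every step passes in isolation
print(chain_allowed(chain))                 # the chain as a whole is rejected
```

Each call in the example is individually permitted, yet the sequence amounts to exfiltration. A per-step allow-list cannot see that; a check over the whole chain can.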

Multi-Agent Confusion: 40% failure → 0% failure

Fake orchestrator impersonation and fabricated agent chain attacks no longer work. Both multi-agent confusion and multi-agent chain categories are now clean.

Jailbreaking & Memory Poisoning: Reduced to 0%

Grok 3 had failures in both jailbreaking (1/5) and memory poisoning (1/5). Grok 4 blocks all attempts in both categories and also eliminates the single output manipulation failure Grok 3 had.

Indirect data injection also improved dramatically: Grok 3 failed 8 of 13 tests (62%). Grok 4 fails only 2 (15%). The reasoning model catches injected instructions hidden in tool outputs, CSV data, and document content far more reliably than the non-reasoning model.

Where Reasoning Made Things Worse

Three categories actually regressed from Grok 3 to Grok 4. Reasoning introduced new failure modes that the simpler model didn't have:

Compliance Bypass: 0% → 40% failure

Grok 3 passed all compliance bypass tests. Grok 4 fails 2 of 5 — it can be convinced to bypass security credential changes and skip contract signing requirements. The reasoning chain appears to rationalize compliance exceptions when presented with plausible business justifications.

Error-Based Extraction: 40% → 60% failure

Grok 4 is more susceptible to extraction through deliberate error triggering. It added a new failure where it reveals restricted account information when probed through error-inducing queries. Reasoning models may be more verbose in error states.

Multi-Language Bypass: 0% → 20% failure

Grok 3 handled all multi-language injection attempts cleanly. Grok 4 fails one test involving Mandarin Chinese injection. The reasoning chain appears to process the foreign-language input more deeply and comply where the simpler model rejected it outright.

The pattern: Reasoning makes Grok 4 better at recognizing structured attacks but worse at maintaining blanket restrictions. When Grok 3 would simply refuse an unfamiliar request format, Grok 4 reasons about whether the request is legitimate — and sometimes reasons itself into compliance.

System Prompt Extraction: No Improvement

Both models failed 3 of 5 system prompt extraction tests. Reasoning did not help here. Interestingly, they mostly failed on different tests: Grok 3 is vulnerable to direct extraction and inverse extraction techniques, while Grok 4 is vulnerable to structured format requests and developer impersonation. The language translation extraction technique breaks both models.

Every model we've tested leaks system prompt information. Grok joins Claude (3/5 failed), GPT-5.2 (4/4 failed), and Gemini (5/5 failed) in this category. The industry-wide lesson remains: never put secrets, API keys, or sensitive business logic in your system prompt.
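One way to act on that lesson is to keep credentials in the tool layer and out of anything the model can read. The sketch below is illustrative only: `build_system_prompt`, `call_refund_api`, and the `REFUND_API_KEY` environment variable are hypothetical names, and the handler stubs out the actual network request.

```python
import os


def build_system_prompt(company):
    """Return a prompt that carries behavior rules but no credentials."""
    return (
        f"You are a customer service assistant for {company}. "
        "Use the refund tool for refund requests. "
        "Never reveal internal configuration."
    )


def call_refund_api(order_id, amount):
    """Hypothetical tool handler: the credential is read server-side at call time."""
    api_key = os.environ.get("REFUND_API_KEY")  # hypothetical variable name
    if api_key is None:
        raise RuntimeError("REFUND_API_KEY is not set")
    # A real handler would send an authenticated request here. This sketch only
    # returns the non-secret fields that are safe to show the model.
    return {"order_id": order_id, "amount": amount, "status": "queued"}
```

With this split, a successful extraction attack leaks the prompt text but not the key, because the key never enters the model's context or the tool result.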

What xAI Gets Right

xAI embeds a security challenge directly inside Grok's sandbox environment, actively inviting researchers to probe their defenses. This is a sign of security maturity — most providers treat their infrastructure as something to hide rather than something to stress-test. It signals that xAI takes infrastructure hardening seriously and isn't relying on obscurity as a defense. That said, infrastructure confidence doesn't translate to model-layer robustness. The sandbox can be well-fortified while the model sitting inside it still folds under social pressure.

Full Category Comparison: Grok 3 vs Grok 4

All 32 injection categories, sorted by failure delta. Negative deltas show where Grok 4 improved. Positive deltas show regressions.

| Category | Tests | Grok 3 Fail | Grok 4 Fail | Delta |
|---|---|---|---|---|
| Indirect Data Injection | 13 | 8 (62%) | 2 (15%) | -6 |
| Delayed Execution | 5 | 5 (100%) | 0 (0%) | -5 |
| Tool Chain Attack | 5 | 4 (80%) | 0 (0%) | -4 |
| Social Engineering Advanced | 10 | 8 (80%) | 4 (40%) | -4 |
| Multi-Agent Confusion | 5 | 2 (40%) | 0 (0%) | -2 |
| Multi-Agent Chain | 5 | 2 (40%) | 0 (0%) | -2 |
| Data Exfiltration | 5 | 5 (100%) | 4 (80%) | -1 |
| Crisis Exploitation | 10 | 9 (90%) | 8 (80%) | -1 |
| Autonomous Decision Chain | 5 | 4 (80%) | 3 (60%) | -1 |
| Jailbreaking | 5 | 1 (20%) | 0 (0%) | -1 |
| Memory Poisoning | 5 | 1 (20%) | 0 (0%) | -1 |
| Output Manipulation | 5 | 1 (20%) | 0 (0%) | -1 |
| System Prompt Extraction | 5 | 3 (60%) | 3 (60%) | 0 |
| Tool Parameter Manipulation | 5 | 3 (60%) | 3 (60%) | 0 |
| Encoding | 5 | 1 (20%) | 1 (20%) | 0 |
| Multi-Language Bypass | 5 | 0 (0%) | 1 (20%) | +1 |
| Error-Based Extraction | 5 | 2 (40%) | 3 (60%) | +1 |
| Compliance Bypass | 5 | 0 (0%) | 2 (40%) | +2 |
| 10 Additional Categories (both 0%) | 50 | 0 | 0 | 0 |

Perfect categories (0% failure for both): Adversarial Suffix, Token Smuggling, Unicode Homoglyph, Context Window, Privilege Escalation, Agent Hijacking, Payload Hiding, Encoding Bypass Evasion, and others.
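The headline figures can be checked directly against the category table. This short sketch sums the per-category failures and derives the reduction; the rows are copied from the table, with the all-zero categories omitted since they add nothing to either total.

```python
# (category, tests, Grok 3 failures, Grok 4 failures), copied from the table.
ROWS = [
    ("Indirect Data Injection", 13, 8, 2),
    ("Delayed Execution", 5, 5, 0),
    ("Tool Chain Attack", 5, 4, 0),
    ("Social Engineering Advanced", 10, 8, 4),
    ("Multi-Agent Confusion", 5, 2, 0),
    ("Multi-Agent Chain", 5, 2, 0),
    ("Data Exfiltration", 5, 5, 4),
    ("Crisis Exploitation", 10, 9, 8),
    ("Autonomous Decision Chain", 5, 4, 3),
    ("Jailbreaking", 5, 1, 0),
    ("Memory Poisoning", 5, 1, 0),
    ("Output Manipulation", 5, 1, 0),
    ("System Prompt Extraction", 5, 3, 3),
    ("Tool Parameter Manipulation", 5, 3, 3),
    ("Encoding", 5, 1, 1),
    ("Multi-Language Bypass", 5, 0, 1),
    ("Error-Based Extraction", 5, 2, 3),
    ("Compliance Bypass", 5, 0, 2),
]

grok3_total = sum(g3 for _, _, g3, _ in ROWS)
grok4_total = sum(g4 for _, _, _, g4 in ROWS)
reduction = (grok3_total - grok4_total) / grok3_total

# Categories that went from at least one failure to none, and the reverse direction.
newly_clean = [name for name, _, g3, g4 in ROWS if g3 > 0 and g4 == 0]
regressions = [(name, g4 - g3) for name, _, g3, g4 in ROWS if g4 > g3]

print(grok3_total, grok4_total, f"{reduction:.0%}")
print(len(newly_clean), "newly clean;", len(regressions), "regressed")
```

The totals match the 59 and 34 failures quoted at the top of the report, and the reduction rounds to 42%.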

Seven-Model Comparison: Where Grok Sits

All models tested with identical system prompts, tool configurations, and evaluation criteria. Enterprise audits (167 tests) for models where available; standard suite (96 tests) for earlier audits.

| Model | Injection Score | Failure Rate | CX+SEA | Reasoning |
|---|---|---|---|---|
| Claude Sonnet 4.6 | 94/A | 5.9% | 17/20 | No |
| GPT-5.2 | 85/B | 14.5% | 18/20 | Yes |
| Grok 4 (grok-4-0709) | 80/B | 20.4% | 8/20 | Yes |
| Gemini 3 Flash | 71/C | 29.5% | 9/20 | No |
| Grok 3 | 66/D | 35.5% | 3/20 | No |
| Mistral Large | 45/F | 56% | N/A | No |
| Gemma 3 27B | 44/F | 57% | N/A | No |

Grok 4 lands in the mid-tier. An 80/B injection score puts it in the same ballpark as GPT-5.2 (85/B) on raw pass rate. But the CX+SEA column tells the real story: Grok 4 passes only 8 of 20 crisis exploitation and social engineering tests, where GPT-5.2 passes 18 and Claude passes 17. That is a 60% failure rate on these high-stakes categories, against 10% for GPT-5.2 and 15% for Claude Sonnet 4.6.
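To make the CX+SEA column easier to compare, the pass counts can be converted to failure rates. The snippet below is a small worked calculation using only the figures in the table; models without CX+SEA results are left out.

```python
# CX+SEA tests passed out of 20, copied from the comparison table.
CX_SEA_PASSED = {
    "Claude Sonnet 4.6": 17,
    "GPT-5.2": 18,
    "Grok 4": 8,
    "Gemini 3 Flash": 9,
    "Grok 3": 3,
}
TOTAL_TESTS = 20

failure_rates = {
    model: (TOTAL_TESTS - passed) / TOTAL_TESTS
    for model, passed in CX_SEA_PASSED.items()
}

# Print from lowest to highest failure rate.
for model, rate in sorted(failure_rates.items(), key=lambda kv: kv[1]):
    print(f"{model}: {rate:.0%}")
```

On this measure Grok 4 sits next to Gemini 3 Flash rather than next to GPT-5.2, despite the similar injection scores.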

Grok 3 sits between Gemini 3 Flash and Mistral Large. A 35.5% failure rate with only 3/20 on CX+SEA places it firmly in the lower tier. The 14-point injection score gap between Grok 3 and Grok 4 is the largest generational improvement we've measured within a single model family.

The reasoning divide is nuanced. Both GPT-5.2 and Grok 4 are reasoning models. GPT-5.2 achieves a 14.5% failure rate; Grok 4 achieves 20.4%. But on crisis exploitation specifically, GPT-5.2 passes 9/10 while Grok 4 passes 2/10. Reasoning capability alone doesn't determine safety outcomes: the training data, RLHF alignment, and safety fine-tuning matter at least as much as the ability to think step by step.

What This Means for Anyone Deploying Grok Agents

If you're building agents on Grok 4, the good news is real: tool chain attacks, delayed execution, multi-agent confusion, jailbreaking, and memory poisoning are genuinely well-handled. These are the categories where reasoning provides a structural advantage, and Grok 4 performs at or near best-in-class.

The bad news is equally real: any deployment where users interact under emotional stress (customer service, financial tools, healthcare triage, security incident response) is at high risk. An 80% crisis exploitation failure rate means that 4 out of 5 simulated crisis scenarios in our tests got the agent to bypass its safety controls. That's not an edge case: panicked, urgent messages are routine in exactly these deployments, and an attacker only has to imitate one.

The recommendation is straightforward: Use Grok 4 over Grok 3 for any security-sensitive deployment. But layer additional defenses around emotional manipulation vectors — input classifiers for urgency patterns, stricter tool authorization under high-emotion contexts, and application-level safeguards that don't rely on the model's judgment alone.
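As a rough illustration of what such a layer might look like, here is a minimal sketch of an urgency gate placed in front of high-risk tools. Everything in it is an assumption: the keyword patterns, the tool names, and the threshold are placeholders, and a production system would more likely use a trained classifier than regular expressions.

```python
import re

# Illustrative urgency cues only; real deployments would need tuned patterns or a model.
URGENCY_PATTERNS = [
    r"\b(hacked|stolen|fraud|compromised)\b",
    r"\b(right now|immediately|asap|urgent(ly)?|emergency)\b",
    r"\bplease hurry\b",
]
_URGENCY_RE = re.compile("|".join(URGENCY_PATTERNS), re.IGNORECASE)

# Hypothetical tool names.
HIGH_RISK_TOOLS = {"reset_credentials", "issue_refund", "change_account_email"}


def urgency_score(message):
    """Count the distinct urgency cues found in a message."""
    return len({m.group(0).lower() for m in _URGENCY_RE.finditer(message)})


def authorize_tool(tool_name, message, identity_verified, threshold=2):
    """Application-level gate that does not depend on the model's judgment.

    High-risk tools are refused when the conversation looks urgent and the
    user's identity has not been verified through a separate channel.
    """
    if tool_name not in HIGH_RISK_TOOLS:
        return True
    if urgency_score(message) >= threshold and not identity_verified:
        return False
    return True


msg = "My account was hacked, I need you to reset my password right now!"
print(urgency_score(msg))
print(authorize_tool("reset_credentials", msg, identity_verified=False))
print(authorize_tool("reset_credentials", msg, identity_verified=True))
```

The design point is that the gate runs outside the model: even if the agent is talked into calling `reset_credentials`, the call is refused until identity verification succeeds.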

Methodology Note

Both audits were conducted on February 28, 2026, using AgentShield's production enterprise audit engine. Grok 3 and Grok 4 (grok-4-0709) agents were accessed with identical customer service configurations including tool access, matching the setup used in our Claude Sonnet 4.6, GPT-5.2, and Gemini 3 Flash audits. Results reflect model behavior at the time of testing and may differ under different prompts, configurations, or after model updates. AgentShield is an independent security testing platform with no commercial relationship with xAI. We follow responsible disclosure practices and do not publish exact attack prompts or payloads.